Like… you need to simulate what the population may look like and then you need to simulate what the sampling behaviour may be… too much simulating, LoL. But this approach has always made me feel kinda awkward because it’s like you’re “double-dipping” in your simulation. So it stands to reason that the average SE should be close to this quantity, even if it was derived empirically through simulation and not analytically. In layperson’s terms, the standard deviation of the sampling distribution of the coefficients is the standard error. (3) Compare the mean SE to the SD of the coefficients. (2) Calculate the mean of the SEs and the standard deviation of the coefficients.

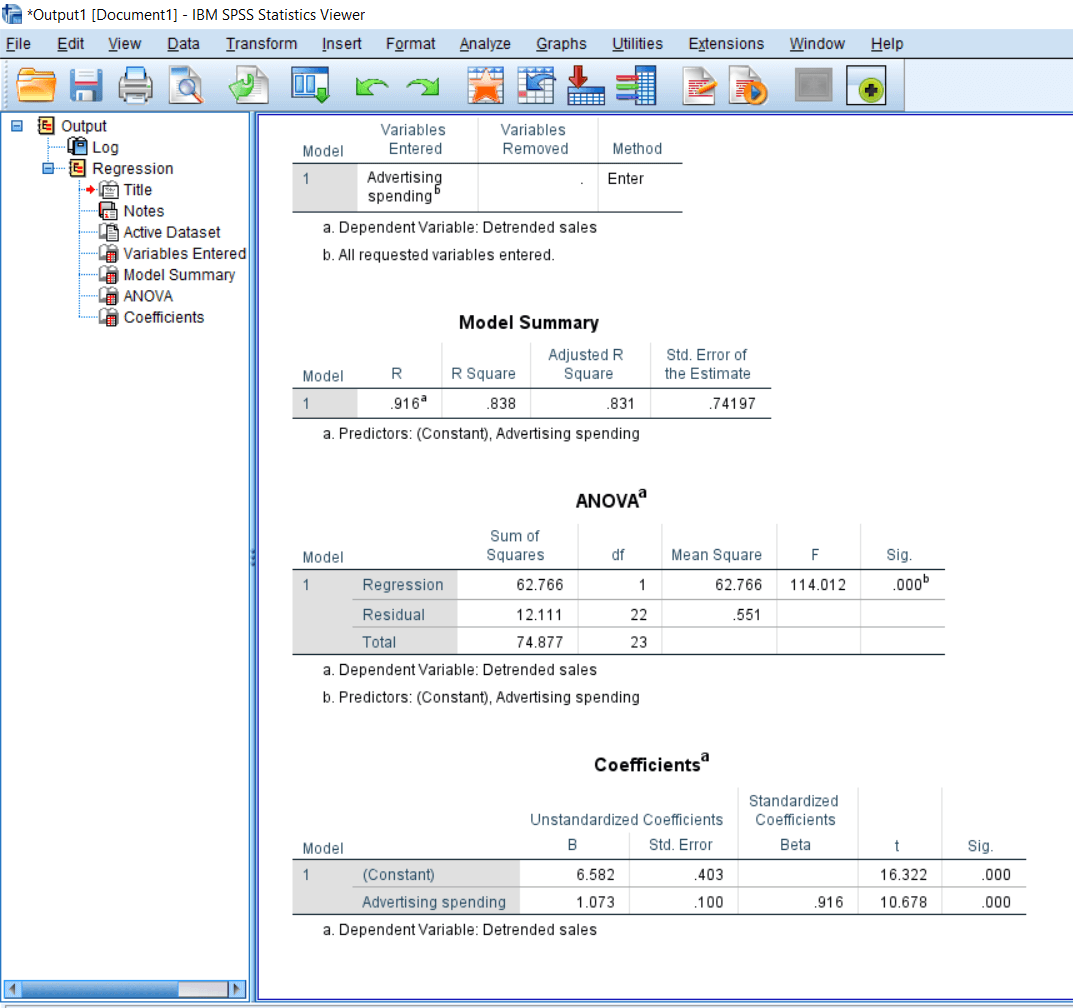

(1) Run your simulation and save both regression coefficients and their standard errors. So…the only way I knew at first about how to assess SEs was in three steps: For the remaining of this article every time I talk about “standard errors” I’m most likely referring to the standard errors of regression coefficients within OLS multiple regression. If they shrink or increase under violations of assumptions then we know something is going to be wrong with our p-values and Type I error rates and yadda-yadda. And a simple way to assess this efficiency is by looking at the estimated standard errors (SEs) of our parameter estimates. When working on ‘robustness-type’ simulations, we are usually not only interested in the unbiasedness and consistency but also the efficiency of our estimators. This is an interesting insight that I had a couple of weeks ago when I was preparing to teach my class in Monte Carlo simulations.
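To make the three-step check concrete, here is a minimal sketch in Python with NumPy. Everything specific in it is an assumption for illustration and not something from my actual class materials: the function name `simulate_ols`, the sample size, the number of replications, the true coefficients, and the well-behaved setup (normal predictors, homoskedastic normal errors). Under those friendly conditions the mean SE and the SD of the coefficients should land close to each other.

```python
import numpy as np

def simulate_ols(n_reps=2000, n=100, beta=(1.0, 0.5, -0.3), sigma=1.0, seed=0):
    """Hypothetical helper: repeat an OLS fit n_reps times.

    Returns (coefs, ses), each an array of shape (n_reps, len(beta)).
    All defaults are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    beta = np.asarray(beta, dtype=float)
    k = beta.size
    coefs = np.empty((n_reps, k))
    ses = np.empty((n_reps, k))
    for r in range(n_reps):
        # Intercept column plus k-1 standard-normal predictors
        X = np.column_stack([np.ones(n), rng.standard_normal((n, k - 1))])
        # Homoskedastic normal errors, so OLS assumptions hold here
        y = X @ beta + rng.normal(0.0, sigma, size=n)
        XtX_inv = np.linalg.inv(X.T @ X)
        b = XtX_inv @ X.T @ y                 # OLS coefficient estimates
        resid = y - X @ b
        s2 = resid @ resid / (n - k)          # residual variance estimate
        coefs[r] = b
        ses[r] = np.sqrt(s2 * np.diag(XtX_inv))  # model-based SEs
    return coefs, ses

# Step (1): run the simulation, saving coefficients and their SEs
coefs, ses = simulate_ols()

# Step (2): mean of the SEs and SD of the coefficients
mean_se = ses.mean(axis=0)
sd_coef = coefs.std(axis=0, ddof=1)

# Step (3): compare them; a ratio near 1 means the SEs are well calibrated
ratio = mean_se / sd_coef
print("mean SE:      ", mean_se)
print("SD of coefs:  ", sd_coef)
print("ratio SE / SD:", ratio)
```

To turn this into a robustness check, you would swap the error-generating line for something that violates an assumption (say, heteroskedastic errors) and see whether the ratio drifts away from 1.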
